By Guy Rosen, VP of Product Management

People will only be comfortable sharing on Facebook if they feel safe. So over the last two years, we’ve invested heavily in technology and people to more effectively remove bad content from our services. This spring, for the first time, we published the internal guidelines our review teams use to enforce our Community Standards — so our community can better understand what’s allowed on Facebook, and why. And in May we published numbers showing how much violating content we have detected on our service, so that people can judge for themselves how well we are doing.

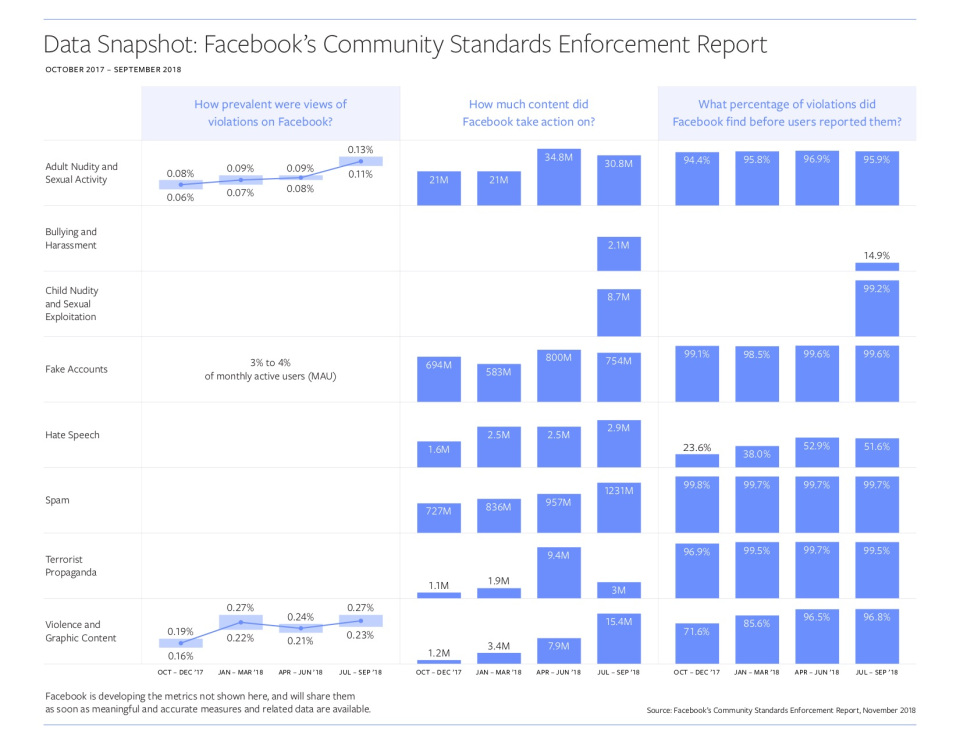

Today, we’re publishing our second Community Standards Enforcement Report. This second report shows our enforcement efforts on our policies against adult nudity and sexual activity, fake accounts, hate speech, spam, terrorist propaganda, and violence and graphic content, for the six months from April 2018 to September 2018. The report also includes two new categories of data — bullying and harassment, and child nudity and sexual exploitation of children.

Finding Content That Violates Our Standards

We are getting better at proactively identifying violating content before anyone reports it, specifically for hate speech and violence and graphic content. But there are still areas where we have more work to do.

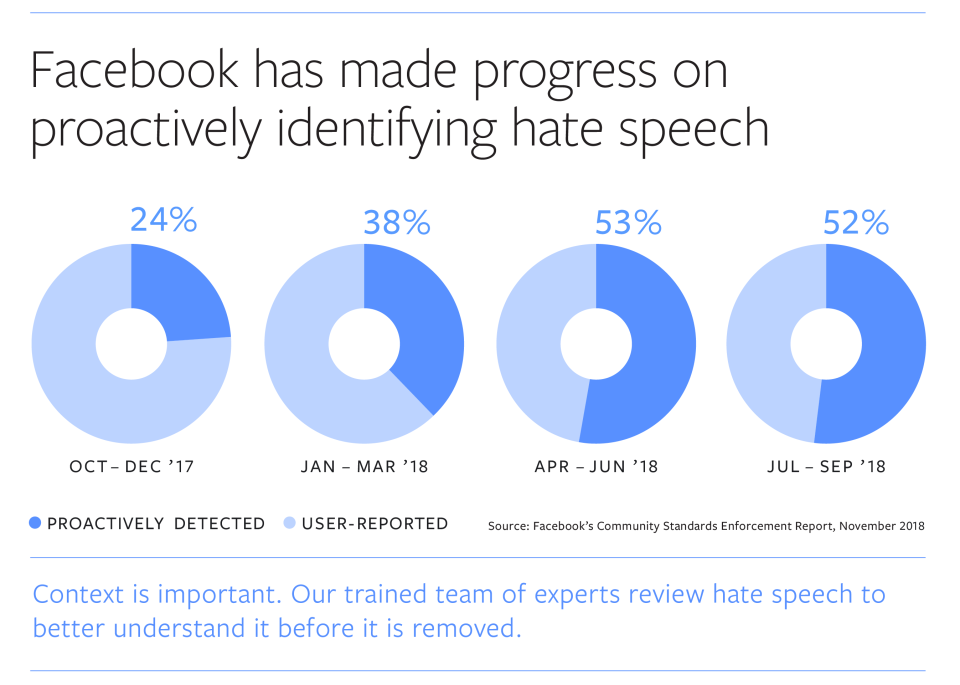

- Since our last report, the amount of hate speech we detect proactively, before anyone reports it, has more than doubled from 24% to 52%. The majority of posts that we take down for hate speech are posts that we’ve found before anyone reported them to us. This is incredibly important work and we continue to invest heavily where our work is in the early stages — and to improve our performance in less widely used languages.

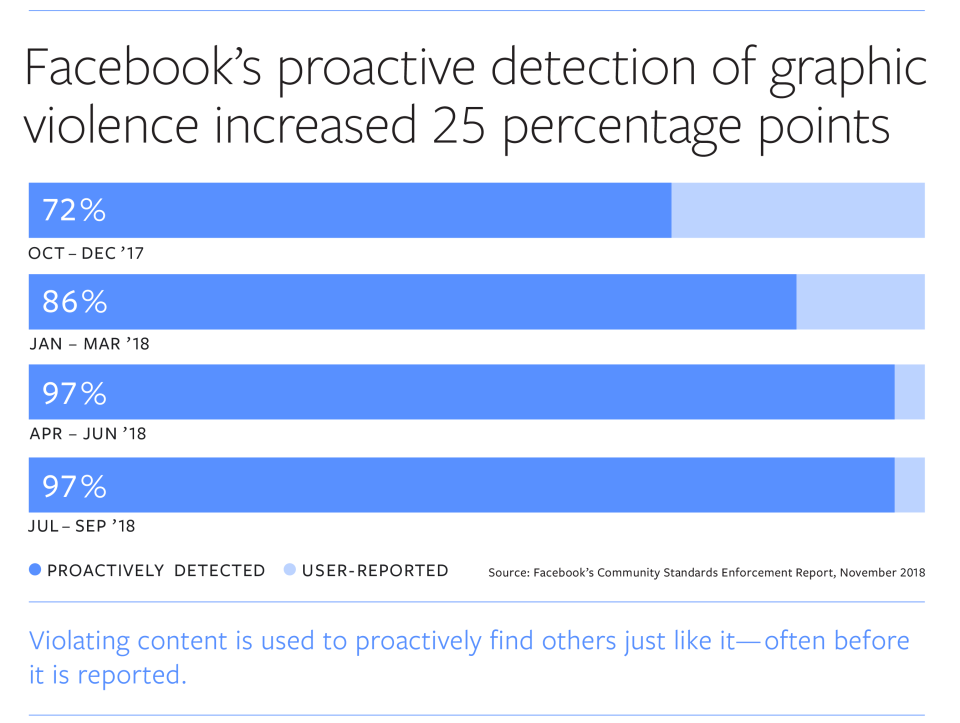

- Our proactive detection rate for violence and graphic content increased 25 percentage points — from 72% to 97%.

(Updated above graph on November 19, 2018 at 10:30AM PT to correct percent of hate speech proactively identified between January and March 2018.)
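The two proactive-detection figures above are reported as rates, and a change in a rate can be read either as a difference in percentage points or as a ratio. As a minimal sketch using only the percentages quoted above (the function names are illustrative and not part of the report's methodology):

```python
def percentage_point_change(before_pct: float, after_pct: float) -> float:
    """Absolute difference between two rates, in percentage points."""
    return after_pct - before_pct


def relative_change(before_pct: float, after_pct: float) -> float:
    """Ratio of the new rate to the old rate (2.0 means 'doubled')."""
    return after_pct / before_pct


# Figures quoted in the post: (previous report, this report), in percent.
hate_speech = (24, 52)
violence_and_graphic = (72, 97)

# Violence and graphic content: the rise described as 25 percentage points.
violence_points = percentage_point_change(*violence_and_graphic)

# Hate speech: "more than doubled" means the ratio exceeds 2.
hate_speech_ratio = relative_change(*hate_speech)
hate_speech_points = percentage_point_change(*hate_speech)

print(f"Violence and graphic content: +{violence_points} percentage points")
print(f"Hate speech: x{hate_speech_ratio:.2f} (+{hate_speech_points} percentage points)")
```

Read this way, the hate speech rate rose by more percentage points than the violence and graphic content rate, even though it started from a much lower base.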

Removing Content and Accounts That Violate Our Standards

We’re not only getting better at finding bad content, we’re also taking more of it down. In Q3 2018, we took action on 15.4 million pieces of violence and graphic content. This included removing content, putting a warning screen over it, disabling the offending account and/or escalating content to law enforcement. This is more than 10 times the amount we took action on in Q4 2017. The increase was due to continued improvements in our technology, which allow us to automatically apply the same action to extremely similar or identical content.

Also, as we announced last week, there was a significant increase in the amount of terrorist content we removed in Q2 of 2018. We expanded the use of our media matching system (technology that can proactively detect photos that are extremely similar to violating content already in our database) to delete old images of terrorist propaganda. Some of this increase was also due to fixing a bug that prevented us from removing some content that violated our policies.

We also took down more fake accounts in Q2 and Q3 than in previous quarters, 800 million and 754 million respectively. Most of these fake accounts were the result of commercially motivated spam attacks trying to create fake accounts in bulk. Because we are able to remove most of these accounts within minutes of registration, the prevalence of fake accounts on Facebook remained steady at 3% to 4% of monthly active users as reported in our Q3 earnings.

Adding New Categories

For the two new categories we’ve added to this report (bullying and harassment, and child nudity and sexual exploitation of children), the data will serve as a starting point so we can measure our progress on these violations over time.

Bullying and harassment tend to be personal and context-specific, so in many instances we need a person to report this behavior to us before we can identify or remove it. This results in a lower proactive detection rate than other types of violations. In the last quarter, we took action on 2.1 million pieces of content that violated our policies for bullying and harassment — removing 15% of it before it was reported. We proactively discovered this content while searching for other types of violations. The fact that victims typically have to report this content before we can take action can be upsetting for them. We are determined to improve our understanding of these types of abuses so we can get better at proactively detecting them.

Our Community Standards ban child exploitation. But to avoid potential for abuse, we remove nonsexual content as well, for example innocent photos of children in the bath, which in another context could easily be misused. In the last quarter alone, we removed 8.7 million pieces of content that violated our child nudity or sexual exploitation of children policies; 99% were identified before anyone reported them. We also recently announced new technology to fight child exploitation on Facebook, which I’m confident will help us identify more illegal content even faster.

Overall, we know we have a lot more work to do when it comes to preventing abuse on Facebook. Machine learning and artificial intelligence will continue to help us detect and remove bad content. Measuring our progress is also crucial because it keeps our teams focused on the challenge and accountable for our work. To help us evaluate our process and data methodologies, we have been working with the Data Transparency Advisory Group (DTAG), a group of measurement and governance experts. We will continue to improve this data over time, so it’s more accurate and meaningful.

You can see the updated Community Standards Enforcement Report and updated information about government requests and IP takedowns here. Both reports will be available in more than 15 languages in early 2019.

Here’s Mark Zuckerberg’s note on this topic: